2.5: Finding Accurate Sources of Nutrition Information

As we discussed in the previous section, science is always evolving, albeit sometimes slowly. One study is not enough to make a guideline or recommendation or to cure a disease. Science is a stepwise process that continuously builds on past evidence and develops towards a well-accepted consensus, although even that can be questioned as new evidence emerges. Unfortunately, the way scientific findings are communicated to the general public can sometimes be inaccurate or confusing. In today’s world, where instant Internet access is just a click away, it’s easy to be overwhelmed or misled if you don’t know where to go for reliable nutrition information. Therefore, it’s important to know how to find accurate sources of nutrition information and how to interpret nutrition-related stories when you see them.

Deciphering Nutrition Information

“New study shows that margarine contributes to arterial plaque.”

“Asian study reveals that two cups of coffee per day can have detrimental effects on the nervous system.”

How do you react when you read headlines like these? Do you boycott margarine and coffee? When reading nutrition-related claims, articles, websites, or advertisements, always remember that one study neither proves nor disproves anything. Readers who are looking for answers to complex nutrition questions can quickly misconstrue such statements and be led down a path of misinformation, especially if the information comes from a source that isn’t credible. Listed below are ways that you can develop a discerning eye when reading news highlighting nutrition science and research.

- The scientific study under discussion should be published in a peer-reviewed journal. Having gone through the peer review process, these studies have been checked by other experts in the field to ensure that their methods and analysis were rigorous and appropriate. Peer-reviewed articles also include a review of previous research findings on the topic of study and examine how the current findings relate to, support, or contrast with previous research. Question studies that come from less trustworthy sources (such as non-peer-reviewed journals or websites) or that are not formally published.

- The report should disclose the methods used by the researcher(s).

- Identify the type of study and where it sits on the hierarchy of evidence. Keep in mind that a study in humans is likely more meaningful than one that’s in vitro or in animals; an intervention study is usually more meaningful than an observational study; and systematic reviews and meta-analyses often give you the best synthesis of the science to date.

- If it’s an intervention study, check for some of the attributes of high-quality research already discussed: randomization, placebo control, and blinding. If it’s missing any of those, what questions does that raise for you?

- Did the study last for three weeks or three years? Depending on the research question, studies that are short may not be long enough to establish a true relationship with the issues being examined.

- Were there ten or two hundred participants? If the study was conducted on only a few participants, it’s less likely that the results would be valid for a larger population.

- What did the participants actually do? It’s important to know if the study included conditions that people rarely experience or if the conditions replicated real-life scenarios. For example, a study that claims to find a health benefit of drinking tea but required participants to drink 15 cups per day may have little relevance in the real world.

- Did the researcher(s) observe the results themselves, or did they rely on self-reports from study participants? Self-reported data can easily be skewed by participants, either intentionally or by accident.

- The article should include details on the subjects (or participants) in the study. Did the study include humans or animals? If human, are any traits/characteristics noted? You may realize you have more in common with certain study participants and can use that as a basis to gauge if the study applies to you.

- Statistical significance is not the same as real-world significance. A statistically significant result is unlikely to have occurred by chance alone, so it probably reflects a real difference. However, this doesn’t automatically mean that the difference is relevant in the real world. For example, imagine a study reporting that a new vitamin supplement causes a statistically significant reduction in the duration of the common cold. Colds can be miserable, so that sounds great, right? But what if you look closer and see that the supplement only shortened study subjects’ colds by half a day? You might decide that it isn’t worth taking a supplement just to shorten a cold by half a day. In other words, it’s not a real-world benefit to you.

- Credible reports should disseminate new findings in the context of previous research. A single study on its own gives you very limited information, but if a body of literature (previously published studies) supports a finding, it adds credibility to the study. A news story about a new scientific finding should also include comments from outside experts (people who work in the same field of research but weren’t involved in the new study) to provide some context for what the study adds to the field, as well as its limitations.

- When reading such news, ask yourself, “Is this making sense?” Even if coffee does adversely affect the nervous system, do you drink enough of it to see any negative effects? Remember, if a headline professes a new remedy for a nutrition-related topic, it may well be a research-supported piece of news, but it could also be a sensationalized story designed to catch the attention of an unsuspecting consumer. Track down the original journal article to see if it really supports the conclusions being drawn in the news report.
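The points above about sample size and about statistical versus real-world significance can be illustrated with a small calculation. The sketch below is only an illustration: the function name `compare_groups`, the cold durations (7.0 versus 6.5 days), the standard deviation (2.5 days), and the group sizes are all made-up assumptions, not data from any study. It uses a simple normal-approximation test, which is a simplification of what researchers would do in practice.

```python
import math


def compare_groups(mean_control, mean_treated, sd, n_per_group):
    """Compare two equal-sized groups using summary statistics only.

    Returns (difference, cohens_d, p_value). The p-value comes from a
    two-sided z-test (normal approximation), which is a simplification;
    a real analysis of a small trial would typically use a t-test.
    """
    difference = mean_control - mean_treated
    # Standard error of the difference between two independent means,
    # assuming a shared standard deviation and equal group sizes.
    standard_error = sd * math.sqrt(2.0 / n_per_group)
    z = difference / standard_error
    # Two-sided p-value under the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2.0))
    # Cohen's d: the difference expressed in standard-deviation units.
    cohens_d = difference / sd
    return difference, cohens_d, p_value


# Hypothetical numbers, chosen only for illustration:
# colds last 7.0 days on placebo and 6.5 days on the supplement (SD 2.5 days).
large_trial = compare_groups(7.0, 6.5, sd=2.5, n_per_group=400)
small_trial = compare_groups(7.0, 6.5, sd=2.5, n_per_group=10)

if __name__ == "__main__":
    for label, (diff, d, p) in [("400 per group", large_trial),
                                ("10 per group", small_trial)]:
        print(f"{label}: difference = {diff:.1f} days, "
              f"Cohen's d = {d:.2f}, p = {p:.3f}")
```

With these invented numbers, the same half-day difference falls below the conventional p < 0.05 threshold when there are 400 participants per group but not when there are only 10. In both cases the size of the effect is identical (half a day), which is why it helps to look at the actual difference and not only at whether a result is labeled "significant."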

The CRAAPP Test

While there is a wealth of information about nutrition on the internet and in books and magazines, it can be challenging to separate the accurate information from the hype and half-truths. You can use the CRAAPP Test [1, 2] to help you determine the validity of the resources you encounter and the information they provide. Applying the following principles will help you judge whether the information is credible. We’ve added several notes to the traditional CRAAPP Test to help you expand your analysis [3] and apply it to nutrition information.

| CRAAPP Test Principle | Questions to ask |
| --- | --- |
| Currency | When was it written or published? Has the website been updated recently? Do you need current information, or will older sources meet your research need? Where is your topic in the information cycle? Note: In general, newer articles are more likely to provide up-to-date perspectives on nutrition science, so as a starting point, look for those published in the last 5-7 years. However, it depends on the question that you’re researching. In some areas, nutrition science hasn’t changed much in recent years, or you may be interested in historical background on the question. In either case, an older article would be appropriate. |
| Relevance | Does it meet stated requirements of your assignment? Does it meet your information needs/answer your research question? Is the information at an appropriate level or for your intended audience? |
| Authority | Who is the creator/author/publisher/source/sponsor? Are they reputable? What are the author’s credentials and their affiliations to groups, organizations, agencies or universities? What type of authority does the creator have? For example, do they have subject expertise (scholar), social position (public office, title), or special experience? Note: The authority on nutrition information would be a registered dietitian nutritionist (RDN), a professional with advanced degree(s) in nutrition (MS or PhD), or a physician with appropriate education and expertise in nutrition. (This will be discussed in more detail on the next page). Look for sources authored or reviewed by experts with this level of authority or written by people who seek out and include their expertise in the article. |
| Accuracy | Is the information reliable, truthful, and correct? Does the creator cite sources for data or quotations? Who did they cite? Are they cherry-picking facts to support their argument? Is the source peer-reviewed, or reviewed by an editor? Do other sources support the information presented? Are there spelling, grammar, and typo errors that demonstrate inaccuracy? Note: Oftentimes, checking the accuracy of information in a given article or website means opening a new internet tab and doing some additional sleuthing to check the claims against other sources. |
| Purpose | Is the intent of the website to inform, persuade, entertain, or sell something? Does the point of view seem impartial or biased? Is the content primarily opinion? Is it balanced with other viewpoints? Who is the intended audience? Note: Particularly if you’re looking at an organization’s website, do some background research on the organization to see who funds it and what is the purpose of the group. That information can help you determine if their point-of-view is likely to be biased. |
| Process | What kind of effort was put into the creation and delivery of this information? Is it a Tweet? A blog post? A YouTube video? A press release? Was it researched, revised, or reviewed by others before published? How does this format fit your information needs or requirements of assignment? |

Table 2.1. The CRAAPP Test is a six-letter mnemonic device for evaluating the credibility and validity of information found through various sources, including websites and social media channels. The CRAAPP Test can be particularly useful in evaluating nutrition-related news and articles.

VIDEO: “How Library Stuff Works: How to Evaluate Resources (the CRAAP Test)” by McMaster Libraries, YouTube (January 23, 2015), 2:09.

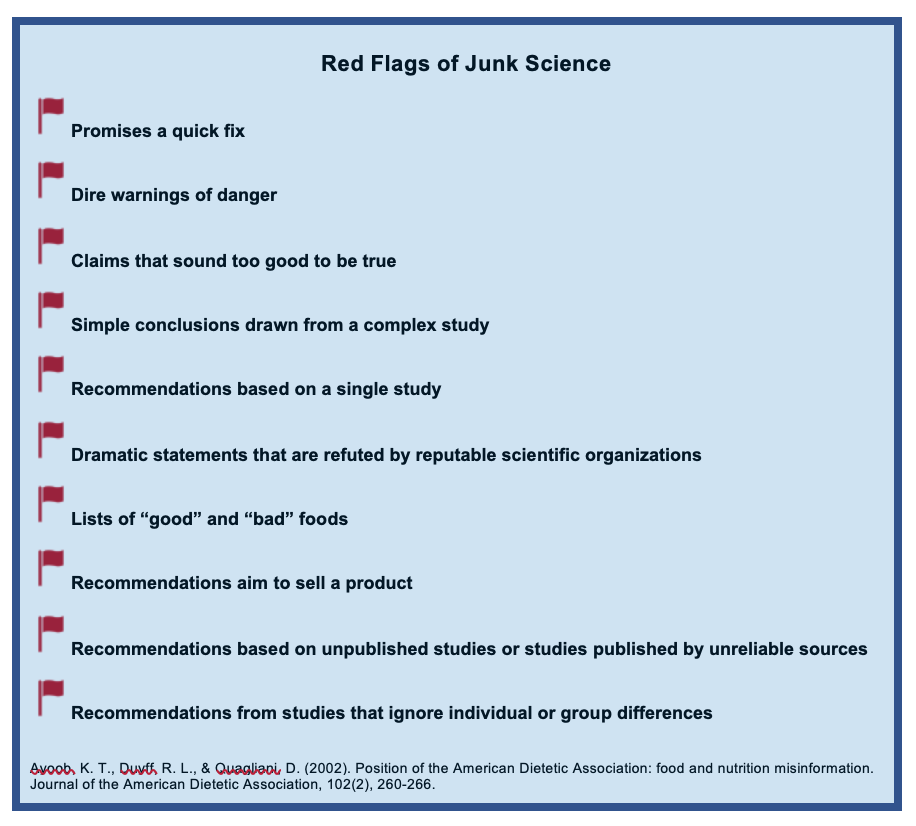

Red Flags of Junk Science

When it comes to nutrition advice, the adage holds true that “if it sounds too good to be true, it probably is.” There are several tell-tale signs of junk science—untested or unproven claims or ideas usually meant to push an agenda or promote special interests. In addition to using the CRAAPP Test to decipher nutrition information, you can also use these simple guidelines to spot red flags of junk science. When you see one or more of these red flags in an article or resource, it’s safe to say you should at least take the information with a grain of salt, if not avoid it altogether.

With the mass quantities of nutrition articles and stories circulating in media outlets each week, it’s easy to feel overwhelmed and unsure of what to believe. But by using the tips outlined above, you’ll be armed with the tools needed to decipher every story you read and decide for yourself how it applies to your own nutrition and health goals.

Attributions:

- Lindshield, B. L. Kansas State University Human Nutrition (FNDH 400) Flexbook. goo.gl/vOAnR, CC BY-NC-SA 4.0

- University of Hawai‘i at Mānoa Food Science and Human Nutrition Program, “Types of Scientific Studies,” CC BY-NC 4.0

- “Principles of Nutrition Textbook, Second Edition” by University System of Georgia is licensed under CC BY-NC-SA 4.0

References:

- [1] Blakeslee, S. (2004). The CRAAP Test. LOEX Quarterly, 31(3), 6-7. https://commons.emich.edu/loexquarterly/vol31/iss3/4

- [2] Lumen Learning. (n.d.). The CRAAPP Test. Introduction to College Research. https://courses.lumenlearning.com/atd-fscj-introtoresearch/chapter/the-craapp-test/

- [3] Fielding, J.A. (2019). Rethinking CRAAP: Getting students thinking like fact-checkers in evaluating web sources. C&RL News, December: 620-622.

Image Credits:

- Real experts book photo by Rita Morais on Unsplash (license information)

- Figure 2.7. An example of a peer-reviewed journal photo by Heather Leonard is licensed under CC BY 4.

- Table 2.1. The CRAAPP Test by Heather Leonard is licensed under CC BY 4.

- Figure 2.8. “The Red Flags of Junk Science” by Heather Leonard is licensed under CC BY 4.