2.1: Introduction


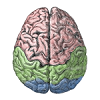

One major reason the brain can be so plastic and learn to do so many different things is that it is made up of a highly sculptable form of silly putty: billions of individual neurons that are densely interconnected with each other and capable of shaping what they do by changing these patterns of interconnections. The brain is like a massive LEGO set, where each of the individual pieces is quite simple (like a single LEGO piece), and all the power comes from the nearly infinite ways that these simple pieces can be recombined to do different things.

So the good news for you, the student, is that the neuron is fundamentally simple. Lots of people will try to tell you otherwise, but as you'll see as you go through this book, simple neurons can account for much of what we know about how the brain functions. So, even though neurons have a lot of moving parts, and you could spend an entire career learning about just one tiny part of a neuron, we strongly believe that all this complexity is in the service of a very simple overall function.

What is that function? Fundamentally, it is about detection. Neurons receive thousands of different input signals from other neurons, looking for specific patterns that are "meaningful" to them. A very simple analogy is with a smoke detector, which samples the air and looks for telltale traces of smoke. When these exceed a specified threshold, the alarm goes off. Similarly, the neuron has a threshold and only sends an "alarm" signal to other neurons when it detects something significant enough to cross this threshold. The alarm is called an action potential, or spike, and it is the fundamental unit of communication between neurons.

Our goal in this chapter is to understand how the neuron receives input signals from other neurons, integrates them into an overall signal strength that is compared against the threshold, and communicates the result to other neurons. We will see how these processes can be characterized mathematically in computer simulations (summarized in Figure 2.1). In the rest of the book, we will see how this simple overall function of the neuron ultimately enables us to perceive the world, to think, to communicate, and to remember.
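To make the receive, integrate, compare, and communicate steps concrete, here is a minimal Python sketch of a neuron treated as a detector. It is a deliberately simplified illustration, not the equations used in the book's simulations: the weighted sum, the function names (`integrate`, `detect`), and the particular weights and threshold are all assumptions chosen for the example.

```python
def integrate(inputs, weights):
    """Combine incoming signals into one overall signal strength.

    Assumption for illustration: a plain weighted sum, where each weight
    stands for how much the neuron "cares" about that input.
    """
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    return sum(x * w for x, w in zip(inputs, weights))


def detect(inputs, weights, threshold):
    """Return True (a "spike") only if the integrated signal exceeds threshold."""
    return integrate(inputs, weights) > threshold


# Hypothetical detector that looks for activity on its first two inputs.
weights = [1.0, 1.0, 0.0, 0.0]
threshold = 1.5

full_pattern = [1, 1, 0, 0]     # both "meaningful" inputs are active
partial_pattern = [1, 0, 0, 0]  # only one of them is active
other_pattern = [0, 0, 1, 1]    # activity the detector ignores

for name, pattern in [("full", full_pattern),
                      ("partial", partial_pattern),
                      ("other", other_pattern)]:
    print(name, integrate(pattern, weights), detect(pattern, weights, threshold))
```

Like the smoke detector, this unit stays silent for the partial and irrelevant patterns and signals only when the pattern it is tuned to pushes the integrated input over the threshold.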

Math warning: This chapter and the Learning Mechanisms Chapter are the only two in the entire book with significant amounts of math (because these two chapters describe in detail the equations for our simulations). We have separated the conceptual from the mathematical content, and those with an aversion to math can get by without understanding all the details. So, don't be put off or overwhelmed by the math here!