4.5: The Leabra Framework

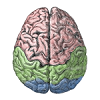

Figure 4.13 summarizes the Leabra framework, the name given to the combination of all the neural mechanisms developed to this point in the text. Leabra stands for Learning in an Error-driven and Associative, Biologically Realistic Algorithm. The name is intended to evoke the "Libra" balance scale: here the balance is between error-driven and self-organizing learning ("associative" is another name for Hebbian learning). It also represents a balance between low-level, biologically detailed models and more abstract, computationally motivated models. The biologically based way of doing error-driven learning requires bidirectional connectivity, and the Leabra framework is unusual in its ability to learn complex computational tasks in the context of this pervasive bidirectional connectivity. The FFFB inhibitory function, which produces k-Winners-Take-All dynamics, is also unique to the Leabra framework and is very important for its overall behavior, especially in managing the dynamics that arise with bidirectional connectivity.

The different elements of the Leabra framework are therefore synergistic with each other and, as we have discussed, highly compatible with the known biological features of the neocortex. Thus, the Leabra framework provides a solid foundation for the cognitive neuroscience models that we explore in the second part of the text.
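As a rough illustration of two of the elements summarized above, the following Python sketch shows one way the balance between self-organizing and error-driven learning, and the FFFB inhibition computation, might be written. It is a minimal sketch and not the simulator's actual code: the function names are hypothetical, and all constants (`theta_d`, `lam_l`, `lam_m`, `lrate`, `gi`, `ff0`, `dt`) are assumed illustrative values rather than values stated in this section.

```python
def xcal(xy, theta, theta_d=0.1):
    """Piecewise-linear XCAL 'checkmark' function.

    xy      : short-term sender * receiver activity product
    theta   : floating threshold the product is compared against
    theta_d : assumed reversal point, as a fraction of theta
    """
    if xy > theta * theta_d:
        return xy - theta
    return -xy * (1.0 - theta_d) / theta_d


def leabra_dwt(x_s, y_s, x_m, y_m, y_l, lrate=0.04, lam_l=0.1, lam_m=1.0):
    """Weight change that mixes self-organizing and error-driven terms.

    x_s, y_s : short-term average sender / receiver activity (outcome)
    x_m, y_m : medium-term averages (expectation)
    y_l      : long-term average receiver activity
    lam_l, lam_m : assumed mixing weights for the two terms
    """
    xy_s = x_s * y_s
    self_org = xcal(xy_s, y_l)          # threshold: long-term receiver average
    err_driven = xcal(xy_s, x_m * y_m)  # threshold: medium-term co-product
    return lrate * (lam_l * self_org + lam_m * err_driven)


def fffb_inhibition(avg_netin, avg_act, fb_prev,
                    gi=1.8, ff=1.0, fb=1.0, ff0=0.1, dt=1.0 / 1.4):
    """Feedforward + feedback inhibition for one layer, one time step.

    avg_netin : average excitatory net input across the layer
    avg_act   : average activation across the layer
    fb_prev   : feedback term from the previous time step
    Returns (inhibitory conductance, updated feedback term).
    """
    ff_term = ff * max(avg_netin - ff0, 0.0)             # anticipates incoming drive
    fb_new = fb_prev + dt * (fb * avg_act - fb_prev)     # tracks actual layer activity
    return gi * (ff_term + fb_new), fb_new


if __name__ == "__main__":
    # Outcome stronger than expectation: the error-driven term is positive.
    print(leabra_dwt(x_s=0.9, y_s=0.9, x_m=0.5, y_m=0.5, y_l=0.4))
    # Inhibition rises as layer activity builds up over a few time steps.
    fb_state = 0.0
    for act in (0.0, 0.1, 0.2):
        g_i, fb_state = fffb_inhibition(0.6, act, fb_state)
        print(g_i)
```

In this sketch the two learning terms share the same function and differ only in the threshold they are compared against, which is one way to picture the "balance" the Leabra name refers to. The inhibition function likewise combines a term driven by net input with a term driven by activity, so that more active layers receive proportionally more inhibition.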

For those already familiar with the Leabra framework, see Leabra Details for a brief review of how the XCAL version of the algorithm described here differs from the earlier form of Leabra described in the original CECN textbook (O'Reilly & Munakata, 2000).

Exploration of Leabra

Open the Family Trees simulation to explore Leabra learning in a deep, multi-layered network running a more complex task with some real-world relevance. This simulation shows how networks can create their own similarity structure based on functional relationships, refuting the common misconception that networks are driven purely by the similarity structure of their inputs.