6.1: Introduction

Perception is at once obvious and mysterious. It is so effortless that we have little appreciation for all the computation that goes on under the hood. And yet we often use terms like "vision" as a metaphor for higher-level concepts (does the President have a vision or not?). Perhaps this reflects a deep truth: many of our higher-level cognitive abilities depend on our perceptual processing systems to do a lot of the hard work. Perception is not the mere act of seeing; it is also leveraged whenever we imagine new ideas or solutions to hard problems. Many of our most innovative scientists (e.g., Albert Einstein, Richard Feynman) used visual reasoning to arrive at their greatest insights. Einstein tried to visualize catching up to a speeding ray of light (in addition to trains stretching and contracting in interesting ways), and one of Feynman's major contributions was a means of visually diagramming complex mathematical operations in quantum physics.

Pedagogically, perception serves as the foundation for our entry into cognitive phenomena. It is the best-studied and most biologically grounded of the cognitive domains. Because so much is known, we can cover only a small fraction of the many fascinating phenomena of perception, and we focus mostly on vision. The core set of issues we do cover, however, captures many of the general principles behind other perceptual phenomena.

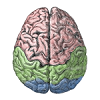

We begin with a computational model of primary visual cortex (V1). It shows how self-organizing learning principles can explain the origin of oriented edge detectors, which capture the dominant statistical regularities present in natural images. The model also shows how excitatory lateral connections can result in the development of topography in V1: neighboring neurons tend to encode similar features, because they tend to activate each other, and learning is determined by activity.
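The idea that activity-driven learning picks up the dominant statistical regularity of its inputs can be illustrated with a toy example. The sketch below is not the V1 model described in this chapter: it substitutes Oja's rule (a normalized Hebbian rule) for the chapter's learning mechanism, uses a single linear unit, and trains on synthetic two-dimensional data rather than natural images. The function names `make_correlated_inputs` and `oja_learn`, and all parameter values, are assumptions made for illustration.

```python
import math
import random


def make_correlated_inputs(n=500, seed=1):
    """Synthetic 2-D inputs whose variance lies mostly along the (1, 1) diagonal."""
    rng = random.Random(seed)
    inv_sqrt2 = 1.0 / math.sqrt(2.0)
    samples = []
    for _ in range(n):
        along = rng.gauss(0.0, 2.0)  # large variance along the diagonal
        samples.append((along * inv_sqrt2 + rng.gauss(0.0, 0.3),
                        along * inv_sqrt2 + rng.gauss(0.0, 0.3)))
    return samples


def oja_learn(samples, lrate=0.01, epochs=20, seed=0):
    """Train one linear unit with Oja's rule: dw = lrate * y * (x - y * w)."""
    rng = random.Random(seed)
    w = [rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0)]
    norm = math.hypot(w[0], w[1])
    w = [w[0] / norm, w[1] / norm]  # start from a random unit vector
    for _ in range(epochs):
        for x in samples:
            y = w[0] * x[0] + w[1] * x[1]          # unit activity
            w[0] += lrate * y * (x[0] - y * w[0])  # Hebbian term minus decay
            w[1] += lrate * y * (x[1] - y * w[1])
    return w


samples = make_correlated_inputs()
weights = oja_learn(samples)

# Cosine between the learned weights and the diagonal (sign is arbitrary).
inv_sqrt2 = 1.0 / math.sqrt(2.0)
alignment = abs(weights[0] * inv_sqrt2 + weights[1] * inv_sqrt2) / math.hypot(*weights)
print("learned weights:", weights)
print("alignment with dominant direction:", alignment)
```

After training, the weight vector should point close to the diagonal along which the inputs vary most, which is the sense in which a Hebbian-style unit "captures" the dominant regularity. Lateral connections and topography are outside the scope of this sketch.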

Building on the features learned in V1, we explore how higher levels of the ventral "what" pathway can learn to recognize objects despite considerable variability in the superficial appearance of these objects as they project onto the retina. Object recognition is the paradigmatic example of how a hierarchically organized sequence of feature category detectors can incrementally solve a very difficult overall problem. Computational models based on this principle can exhibit high levels of object recognition performance on realistic visual images, and thus provide a compelling suggestion that the brain likely solves the problem this way as well.

Next, we consider the role of the dorsal "where" (or "how") pathway in spatial attention. Spatial attention is important for many things, including object recognition when multiple objects are in view: it helps focus processing on one of the objects while degrading the activity of features associated with the others, reducing potential confusion. Our computational model of this interaction between the "what" and "where" processing streams can account for the effects of brain damage to the "where" pathway. Damage to only one side of the brain gives rise to hemispatial neglect, and bilateral damage gives rise to a phenomenon called Balint's syndrome. This ability to account for both neurologically intact and brain-damaged behavior is a powerful advantage of using neurally based models.

As usual, we begin with a review of the biological systems involved in perception.