6.2: Biology of Perception

Our goal in this section is to understand just enough about the biology to get an overall sense of how information flows through the visual system, and the basic facts about how different parts of the system operate. This will serve to situate the models that come later, which provide a much more complete picture of each step of information processing.

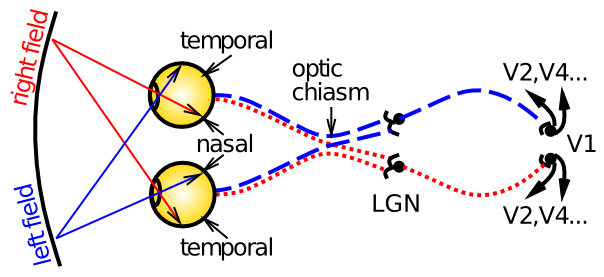

Figure 6.1 shows the basic optics and transmission pathways of visual signals, which come in through the retina, and progress to the lateral geniculate nucleus of the thalamus (LGN), and then to primary visual cortex (V1). The primary organizing principles at work here, and in other perceptual modalities and perceptual areas more generally, are:

- Transduction of different information -- in the retina, photoreceptors are sensitive to different wavelengths of light (red = long wavelengths, green = medium wavelengths, and blue = short wavelengths), giving us color vision. Retinal signals also differ in their spatial frequency, meaning how coarse or fine a feature they detect: photoreceptors in the central fovea region support high spatial frequency (fine resolution), while those in the periphery have lower resolution. They differ as well in their temporal response (fast vs. slow responding, including differential sensitivity to motion).

- Organization of information in a topographic fashion -- for example, the left vs. right visual fields are organized into the contralateral hemispheres of cortex -- as the figure shows, signals from the left part of visual space are routed to the right hemisphere, and vice-versa. Information within LGN and V1 is also organized topographically in various ways. This organization generally allows similar information to be contrasted, producing an enhanced signal, and also grouped together to simplify processing at higher levels.

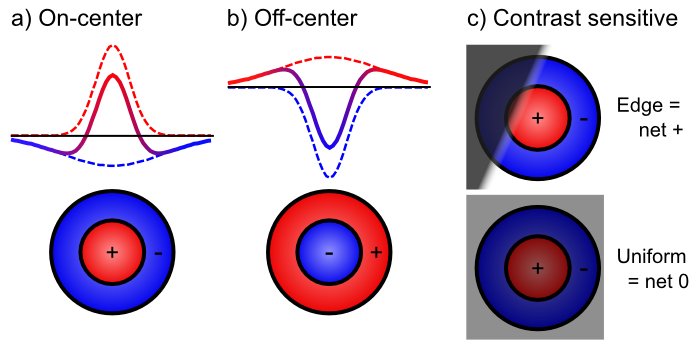

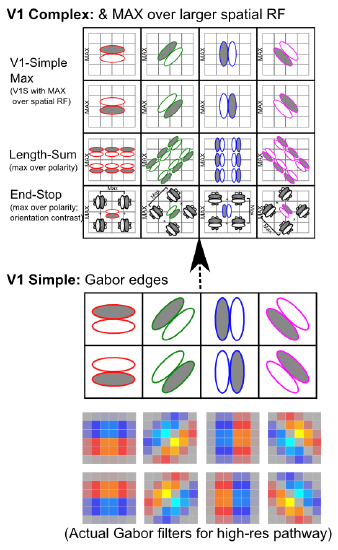

- Extracting relevant signals, while filtering irrelevant ones -- Figure 6.2 shows how retinal cells respond only to contrast, not uniform illumination, by using center-surround receptive fields (e.g., on-center, off-surround, or vice-versa). Only when one part of this receptive field gets different amounts of light compared to the others do these neurons respond. Typically this arises with edges of contrast, where illumination transitions between light and dark, as shown in the figure -- these transitions are the most informative aspects of an image, while regions of constant illumination can be safely ignored. Figure 6.3 shows how these center-surround signals (which are present in the LGN as well) can be integrated together in V1 simple cells to detect the orientation of these edges -- these edge detectors form the basic vocabulary for describing images in V1. It should be easy to see how more complex shapes can then be constructed from these basic line/edge elements. V1 also contains complex cells that build upon the simple cell responses (Figure 6.4), providing a somewhat richer basic vocabulary. The following videos show how we know what these receptive fields look like:

- Classic Hubel & Wiesel V1 receptive field mapping using old school projector stimuli: http://www.youtube.com/watch?v=KE952yueVLA

- Newer reverse correlation V1 receptive field mapping: http://www.youtube.com/watch?v=n31XBMSSSpI
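The center-surround and simple-cell ideas in the list above can be illustrated with a small sketch. It assumes a difference-of-Gaussians kernel for the on-center, off-surround receptive field and a plain sum of aligned units for the simple cell. These are common modeling conventions rather than anything specified in this section, and the names `dog_kernel`, `center_surround`, and `simple_cell`, along with all sizes and widths, are hypothetical.

```python
import math


def dog_kernel(size=7, sigma_center=1.0, sigma_surround=2.0):
    """On-center, off-surround kernel: a narrow Gaussian minus a wider one.

    Each Gaussian is normalized to sum to 1, so the kernel sums to 0 and
    uniform illumination cancels out. (Assumed form, not from the text.)
    """
    half = size // 2
    coords = range(-half, half + 1)

    def gaussian(sigma):
        g = [[math.exp(-(x * x + y * y) / (2 * sigma * sigma)) for x in coords]
             for y in coords]
        total = sum(sum(row) for row in g)
        return [[v / total for v in row] for row in g]

    center, surround = gaussian(sigma_center), gaussian(sigma_surround)
    return [[c - s for c, s in zip(c_row, s_row)]
            for c_row, s_row in zip(center, surround)]


def center_surround(image, kernel, row, col):
    """Rectified response of one unit with its receptive field centered at (row, col)."""
    half = len(kernel) // 2
    total = sum(kernel[dy + half][dx + half] * image[row + dy][col + dx]
                for dy in range(-half, half + 1)
                for dx in range(-half, half + 1))
    return max(0.0, total)


def simple_cell(image, kernel, row, col, orientation, length=7):
    """Sum center-surround units lying along a vertical or horizontal line."""
    offsets = range(-(length // 2), length // 2 + 1)
    if orientation == "vertical":
        return sum(center_surround(image, kernel, row + i, col) for i in offsets)
    return sum(center_surround(image, kernel, row, col + i) for i in offsets)


SIZE = 21
uniform = [[1.0] * SIZE for _ in range(SIZE)]
# Dark on the left, light on the right: a vertical edge.
vertical_edge = [[1.0 if c >= 10 else 0.0 for c in range(SIZE)] for _ in range(SIZE)]
# Dark on top, light below: a horizontal edge.
horizontal_edge = [[1.0 if r >= 10 else 0.0 for _ in range(SIZE)] for r in range(SIZE)]

kernel = dog_kernel()
print("unit, uniform light:", center_surround(uniform, kernel, 10, 10))
print("unit, next to edge: ", center_surround(vertical_edge, kernel, 10, 11))
print("vertical cell, vertical edge:  ",
      simple_cell(vertical_edge, kernel, 10, 11, "vertical"))
print("vertical cell, horizontal edge:",
      simple_cell(horizontal_edge, kernel, 10, 11, "vertical"))
```

Under these assumptions, a single unit stays essentially silent under uniform light and responds next to the edge. The vertically aligned sum responds more strongly to the vertical edge than to the horizontal one, which is the sense in which aligned center-surround inputs yield orientation selectivity.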

In the auditory pathway, the cochlea (specifically its basilar membrane) plays a role analogous to that of the retina. It also has a topographic organization, here according to the frequency of sounds, producing the rough equivalent of a Fourier transformation of sound into a spectrogram. This basic sound signal is then processed in auditory pathways to extract relevant patterns of sound over time, in much the same way as occurs in vision.
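To make the spectrogram analogy concrete, here is a minimal sketch of a short-time Fourier transform written with only the Python standard library. It is an engineering analogy rather than a model of the cochlea. The sample rate, frame length, hop size, Hann window, and the two test tones are all illustrative assumptions, and `spectrogram`, `dft_magnitudes`, and `peak_bin` are hypothetical helper names.

```python
import cmath
import math


def dft_magnitudes(frame):
    """Magnitude of the discrete Fourier transform for non-negative frequencies."""
    n = len(frame)
    return [abs(sum(x * cmath.exp(-2j * math.pi * k * t / n)
                    for t, x in enumerate(frame)))
            for k in range(n // 2 + 1)]


def spectrogram(signal, frame_len=64, hop=32):
    """Slide a Hann window over the signal and take one spectrum per frame."""
    window = [0.5 - 0.5 * math.cos(2 * math.pi * t / (frame_len - 1))
              for t in range(frame_len)]
    return [dft_magnitudes([signal[start + t] * window[t] for t in range(frame_len)])
            for start in range(0, len(signal) - frame_len + 1, hop)]


def peak_bin(spectrum):
    """Index of the frequency bin with the largest magnitude."""
    return max(range(len(spectrum)), key=spectrum.__getitem__)


SAMPLE_RATE = 1024  # Hz (assumed)
FRAME_LEN = 64
# A 128 Hz tone followed by a 320 Hz tone.
signal = [math.sin(2 * math.pi * (128 if t < 256 else 320) * t / SAMPLE_RATE)
          for t in range(512)]

spec = spectrogram(signal, frame_len=FRAME_LEN)
bin_hz = SAMPLE_RATE / FRAME_LEN  # width of one frequency bin in Hz
print([peak_bin(frame) * bin_hz for frame in spec])
```

Each row of `spec` is the frequency content of one short stretch of sound, so the dominant frequency shifts from the first tone to the second as the frames advance. This is loosely comparable to activity moving along a frequency-organized map over time.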

Moving up beyond the primary visual cortex, the perceptual system provides an excellent example of the power of hierarchically organized layers of neural detectors, as we discussed in the Networks Chapter. Figure 6.5 shows the anatomical connectivity patterns of all of the major visual areas, starting from the retinal ganglion cells (RGC) to LGN to V1 and on up. The specific patterns of connectivity allow a hierarchical structure to be extracted, as shown, even though there are many interconnections outside of a strict hierarchy as well.

Figure 6.6 puts these areas into their anatomical locations, showing more clearly a "what" vs. "where" (ventral vs. dorsal) split in visual processing. The projections going in a ventral direction from V1 to V4 to areas of inferotemporal cortex (IT) (TE, TEO, labeled as PIT for posterior IT in the previous figure) are important for recognizing the identity ("what") of objects in the visual input, while those going up through parietal cortex extract spatial ("where") information, including motion signals in areas MT and MST. We will see later in this chapter how each of these visual streams of processing can function independently, and also interact together to solve important computational problems in perception.

Here is a quick summary of the flow of information up the what side of the visual pathway (pictured on the right side of Figure 6.5):

- V1 -- primary visual cortex, which encodes the image in terms of oriented edge detectors that respond to edges (transitions in illumination) along different angles of orientation. We will see in the first simulation in this chapter how these edge detectors develop through self-organizing learning, driven by the reliable statistics of natural images.

- V2 -- secondary visual cortex, which encodes combinations of edge detectors to develop a vocabulary of intersections and junctions, along with many other basic visual features (e.g., 3D depth selectivity, basic textures, etc.), that provide the foundation for detecting more complex shapes. These V2 neurons also encode these features in a broader range of locations, starting a process that ends up with IT neurons being able to recognize an object regardless of where it appears in the visual field (i.e., invariant object recognition).

- V4 -- detects more complex shape features, over an even larger range of locations (and sizes, angles, etc.).

- IT-posterior (PIT) -- detects entire object shapes, over a wide range of locations, sizes, and angles. For example, there is an area near the fusiform gyrus on the bottom surface of the temporal lobe, called the fusiform face area (FFA), that appears especially responsive to faces. As we saw in the Networks Chapter, however, objects are encoded in distributed representations over a broad range of areas in IT.

- IT-anterior (AIT) -- this is where visual information becomes extremely abstract and semantic in nature -- it can encode all manner of important information about different people, places and things.
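The progression toward location invariance in the summary above can be sketched with a toy one-dimensional example. It assumes exact template matching for the location-specific detectors and max pooling over neighboring units for each higher stage. Both are simplifying assumptions chosen for illustration, not the mechanism used by the models later in this chapter, and the function names and stage labels are hypothetical.

```python
def place(pattern, position, size=18):
    """Put a small pattern at a given position in an otherwise blank 1D 'retina'."""
    image = [0] * size
    image[position:position + len(pattern)] = pattern
    return image


def detect(image, template):
    """Location-specific detectors: one unit per position, active on an exact match."""
    m = len(template)
    return [1.0 if image[i:i + m] == template else 0.0
            for i in range(len(image) - m + 1)]


def pool(units, width):
    """Each higher unit takes the max over a neighborhood of lower units."""
    return [max(units[i:i + width]) for i in range(0, len(units), width)]


def hierarchy(image, template):
    """Return the activity at three stages with progressively larger receptive fields."""
    low = detect(image, template)   # location-specific, loosely V1-like
    mid = pool(low, 4)              # tolerates small shifts
    top = pool(mid, 4)              # responds anywhere in the field, loosely IT-like
    return low, mid, top


pattern = [1, 0, 1]
for position in (2, 11):
    low, mid, top = hierarchy(place(pattern, position), pattern)
    print(position, low, mid, top)
```

In this toy setup the lowest stage is active at a different unit for each position, while the top stage gives the same response wherever the pattern appears. That is the basic idea behind invariant object recognition emerging across successive areas.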

In contrast, the where aspect of visual processing, going up in a dorsal direction through the parietal cortex (areas MT, VIP, LIP, MST), contains areas that are important for processing motion, depth, and other spatial features.