9.1: Introduction


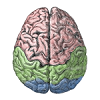

Language involves almost every part of the brain, as covered in other chapters of this text:

- Perception and Attention: language requires perceiving words both from auditory sound waves and from written text. Attention is critical for picking out individual words on the page and individual speakers in a crowded room. In this chapter, we see how a version of the object recognition model from the perception chapter can perform written word recognition, in a way that specifically leverages that model's spatial invariance property.

- Motor control: language production obviously requires motor output in the form of speech, writing, etc. Fluent speech depends on an intact cerebellum, and the basal ganglia have been implicated in a number of linguistic phenomena.

- Learning and Memory: early word learning likely depends on episodic memory in the hippocampus, while longer-term memory for word meaning depends on slow integrated learning in the cortex. Memory for recent topics of discourse and reading (which can span months in the case of reading a novel) likely involves the hippocampus and sophisticated semantic representations in temporal cortex.

- Executive Function: language is a complex mental faculty that depends critically on coordination and working memory supported by the prefrontal cortex (PFC) and basal ganglia -- for example, encoding syntactic structures over time, binding pronouns to their referents, and other more transient forms of memory.

One could conclude from this that language is not particularly special, and instead represents a natural specialization of domain-general cognitive mechanisms. Of course, people have a specialized articulatory apparatus for producing speech sounds, which other primate species lack, but one could argue that everything built on top of this is just language infecting pre-existing cognitive brain structures. Certainly reading and writing are too recent for evolution to have produced any adaptations to support them (but they are also the least "natural" aspects of language, requiring explicit schooling, compared to the essentially automatic manner in which people absorb spoken language).

But language is fundamentally different from any other cognitive activity in a number of important ways:

- Symbols -- language requires thought to be reduced to a sequence of symbols, transported across space and time, to be reconstructed in the receiver's brain.

- Syntax -- language obeys complex abstract regularities in the ordering of words and letters/phonemes.

- Temporal extent and complexity -- language can unfold over a very long time frame (e.g., Tolstoy's War and Peace), conveying a level of complexity and richness that far exceeds any naturally occurring experience that might arise outside of the linguistic environment. If you ever find yourself watching a movie on an airplane without the sound, you'll appreciate that visual imagery represents the lesser half of most movies' content (the interesting ones, anyway).

- Generativity -- language is "infinite" in the sense that the number of different possible sentences that could be constructed is so large as to be effectively infinite. Language is routinely used to express new ideas. You may find some of those here.

- Culture -- much of our intelligence is imparted through cultural transmission, conveyed through language. Thus, language shapes cognition in the brain in profound ways.

The "special" nature of language and its dependence on domain-general mechanisms represent two poles of a continuum of approaches taken by different researchers. Within this broad span, there is plenty of room for controversy and contradictory opinions. Noam Chomsky famously and influentially theorized that we are all born with an innate universal grammar, so that learning a language amounts to discovering the specific parameter settings of the particular language being learned. At the other extreme, connectionist language modelers such as Jay McClelland argue that completely unstructured, generic neural mechanisms (e.g., backpropagation networks) are sufficient to explain (at least some of) the special things about language.

Our overall approach is clearly rooted in the domain-general camp, given that the same general-purpose neural mechanisms used to explore a wide range of other cognitive phenomena are brought to bear on language here. However, we also think that certain features of the PFC / basal ganglia system play a special role in symbolic, syntactic processing. At present, these special contributions are only briefly touched upon here and elaborated just a bit more in the executive function chapter, but we plan to develop them further in the future. One hint at these special contributions comes from mirror neurons, discovered in the frontal cortex of monkeys in an area thought to be homologous to Broca's area in humans. These neurons fire both when a monkey performs an action and when it observes another individual performing it; they appear to encode the intentions behind actions performed by other people (or monkeys), and thus may constitute a critical capacity for understanding what others are trying to communicate.

We start, as usual, with the biological grounding of language, in terms of particularly important brain areas and the biology of speech. Then we look at the basic perceptual and motor pathways in the context of reading, including an account of how damage to different areas can give rise to distinctive patterns of acquired dyslexia. We explore a large-scale reading model, based on our object recognition model from the perception chapter, that is capable of pronouncing the roughly 3,000 monosyllabic words in English and generalizing this knowledge to nonwords. Next, we consider the nature of semantic knowledge, and see how a self-organizing model can encode word meaning in terms of the statistics of word co-occurrence, as developed in the Latent Semantic Analysis (LSA) model. Finally, we explore syntax at the level of sentences in the Sentence Gestalt model, where syntactic and semantic information are integrated over time to form a "gestalt"-like understanding of sentence meaning.